The Distillation Wars: Espionage or Efficiency?

Summary

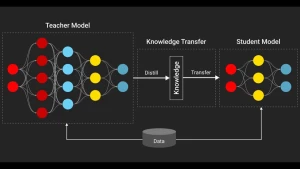

AI startup Anthropic claimed that the Chinese labs DeepSeek, Moonshot AI, and MiniMax conducted "industrial-scale distillation attacks" against its Claude chatbot. According to Anthropic, they used 24,000 fake accounts and more than 16 million API queries to steal Claude's reasoning and coding abilities. Anthropic framed this as a national security threat. It warned that distilled models lack safeguards against misuse for bioweapons or cyberoffense, and it urged tighter U.S. export controls.

Model distillation itself is a standard, legitimate technique in which a smaller "student" model learns from the outputs of a larger "teacher" model. Anthropic and OpenAI routinely use it themselves. Critics argue that the cited volume of 16 million exchanges is comparable to routine large-scale testing. They also note that MiniMax was caught while it was still training its upcoming model.

The accusation has also drawn charges of hypocrisy. Anthropic itself has faced lawsuits over allegedly scraping copyrighted material such as books and music to train its own frontier models, and it has settled with authors. This has prompted the critique: "data for me, but not for you."
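The following minimal sketch makes the student/teacher relationship concrete. It implements the classic soft-target distillation loss (Hinton et al., 2015) in plain Python. It assumes the student can see the teacher's full output distribution (its logits). The function names and the default temperature of 2.0 are illustrative choices, not anything described in the source. Distillation through a public API usually sees only generated text. In that setting, the student is instead fine-tuned directly on the teacher's responses.

```python
import math
from typing import Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> list[float]:
    """Convert raw logits to probabilities, softened by a temperature > 0."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) for two discrete distributions over the same classes."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(
    teacher_logits: Sequence[float],
    student_logits: Sequence[float],
    temperature: float = 2.0,  # illustrative value, not from the source
) -> float:
    """Soft-target distillation loss: penalize the student for diverging from
    the teacher's temperature-softened distribution. The T**2 factor keeps
    gradient magnitudes comparable across temperatures (Hinton et al., 2015)."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    return temperature ** 2 * kl_divergence(p_teacher, p_student)


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]        # hypothetical teacher logits
    close_student = [3.8, 1.1, 0.4]  # ranks classes like the teacher
    far_student = [0.5, 1.0, 4.0]    # ranks classes in reverse
    print(distillation_loss(teacher, close_student))
    print(distillation_loss(teacher, far_student))
```

A student whose logits roughly track the teacher's gets a much lower loss than one that ranks the classes differently. In actual training, this loss would be minimized by gradient descent over the student's parameters. It is often mixed with an ordinary cross-entropy term on the true labels.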

(Source: Aisuperhuman Substack)